Deploying a Metro Storage Cluster across two sites using HUAWEI OceanStor V5/ V5 F HyperMetro and VMware vSphere

Article ID: 342626

Updated On:

Products

VMware vSphere ESXi

Issue/Introduction

This article provides information about deploying a Metro Storage Cluster across two sites using HUAWEI OceanStor V5/ V5 F HyperMetro and VMware vSphere.

Environment

VMware vSphere ESXi 6.5

VMware vSphere ESXi 6.0

VMware vSphere ESXi 6.7

VMware vSphere ESXi 7.0

VMware vSphere ESXi 5.5

Resolution

HUAWEI OceanStor V5/ V5 F and HyperMetro

The OceanStor V5/ V5 F converged storage platform, newly developed by Huawei Technologies Co., Ltd (Huawei for short), includes mid-range and high-end storage systems. It meets the requirements of medium and large-sized enterprises for mass data storage, fast data access, high availability, high utilization, energy saving, and ease of use.

HyperMetro delivers active-active service capabilities based on two storage arrays. Data on the active-active LUNs/file systems at both ends is synchronized in real time, and both ends process read and write I/O from application servers, giving the servers undifferentiated, parallel active-active access.

Huawei OceanStor V5/ V5 F HyperMetro supports both SAN and NAS.

Minimum Requirements

These are the minimum system requirements for a vMSC solution with HyperMetro:

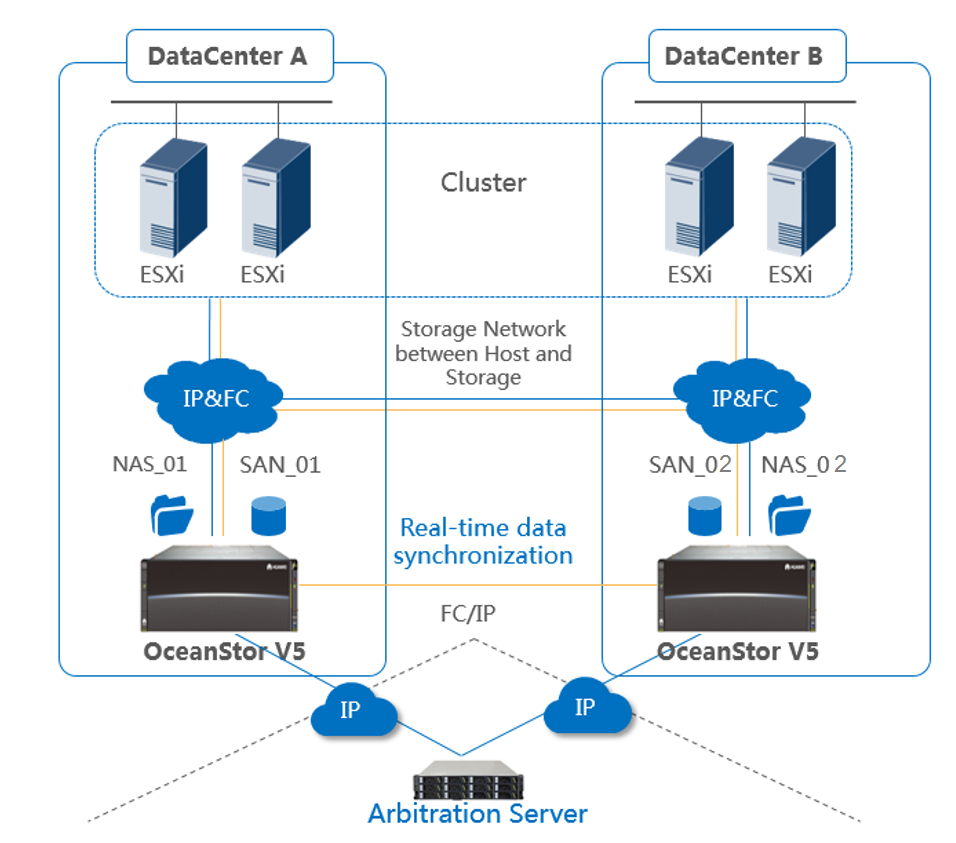

Figure 1-1 OceanStor V5 HyperMetro Configurations

In HyperMetro configurations:

HyperMetro supports arbitration by pair or by consistency group. In the event of link or other failures, HyperMetro provides two arbitration modes (Only one Arbitration server required for both Block and File in a HyperMetro Domain):

Recommendations and Limitations

It’s recommended that the Host LUN ID from both storage systems should be the same for same LUN.

It’s recommended that the user mapping the SAN_02 device to ESXi host only after the Pair is success configured. User can use the rescan command to detect the new path, paths are detected automatically by UltraPath or VMware NMP. There might be some delay in automatic detection.

A certified configuration of OceanStor V5 is available, and is listed in the Broadcom Compatibility Guide

Tested Scenarios

This table below shows uniform host access topology, outlines the tested and supported failure scenarios when using a Huawei OceanStor V5 Storage Cluster for VMware vSphere:

The OceanStor V5/ V5 F converged storage platform is newly developed by Huawei Technologies Co., Ltd (Huawei for short), include mid-range and high-end storage systems. It meets medium and large-sized enterprises' storage requirements for mass data storage, speed data access, high availability, high utilization, energy saving, and ease-of-use.

HyperMetro delivers the active-active service capabilities based on two storage arrays. Data on the active-active LUNs/File Systems at both ends is synchronized in real time, and both ends process read and write I/Os from application servers to provide the servers with non-differentiated parallel active-active access.

Huawei OceanStor V5/ V5 F HyperMetro supports both SAN and NAS.

Minimum Requirements

These are the minimum system requirements for a vMSC solution with HyperMetro:

- Huawei OceanStor V500R007C00 storage system or newer.

- HyperMetro is a value-added feature that requires a software license for use on both OceanStor V5/ V5 F arrays.

- Huawei OceanStor V5/ V5 F should be connected with ESXi via FC, iSCSI or NFS protocol.

- ESXi 5.1 and later.

- Huawei UltraPath (21.0.2 or newer) or VMware NMP.

- Support Host access in uniform (recommended) topology or non-uniform topology.

Figure 1-1 OceanStor V5 HyperMetro Configurations

In HyperMetro configurations:

- SAN_01 and SAN_02 on OceanStor V5 arrays are Read/Write accessible to hosts.

- Hosts can read from and write to both devices in a HyperMetro pair.

- SAN_02 devices assume the same external device identity (geometry, device WWN) as their SAN_01 counterparts.

- This shared identity causes the SAN_01 and SAN_02 devices to appear to the host(s) as a single virtual device across the two arrays.

- NAS_01 and NAS_02 form a NAS HyperMetro pair.

- NAS_01 and NAS_02 share the same file system attributes, including the logical port address and access control list.

- If NAS_01 malfunctions, NAS_02 automatically takes over services without data loss or service interruption, and vice versa.
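The shared-identity behavior described above can be illustrated with a minimal sketch. It is not how UltraPath or VMware NMP is implemented; it only shows why paths from two arrays that report the same WWN collapse into one device on the host. The `Path` class, the array and port labels, and the WWN value are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Path:
    array: str  # hypothetical label for the array serving this path
    port: str   # hypothetical target port name
    wwn: str    # device WWN reported on this path


def group_paths_by_wwn(paths):
    """Group paths by the WWN they report, one entry per visible device."""
    devices = defaultdict(list)
    for path in paths:
        devices[path.wwn].append(path)
    return dict(devices)


# SAN_01 (array A) and SAN_02 (array B) present the same WWN,
# so the host ends up with one device that has four paths.
paths = [
    Path("array_A", "A.P0", "wwn-example-0001"),
    Path("array_A", "A.P1", "wwn-example-0001"),
    Path("array_B", "B.P0", "wwn-example-0001"),
    Path("array_B", "B.P1", "wwn-example-0001"),
]
devices = group_paths_by_wwn(paths)
print(len(devices), "device(s),", len(devices["wwn-example-0001"]), "paths")
```

If SAN_02 reported a different WWN, the same grouping would yield two separate devices, which is the situation the HyperMetro pair avoids.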

HyperMetro supports arbitration by pair or by consistency group. In the event of a link or other failure, HyperMetro provides two arbitration modes (only one arbitration server is required for both block and file services in a HyperMetro domain):

- Static priority mode: This mode is mainly used where no third-party arbitration server is deployed. In this mode, you can set either end as the preferred site, per active-active pair or consistency group; the other end becomes the non-preferred site.

If the link between the storage arrays or the non-preferred site encounters a fault, LUNs at the preferred site are accessible, and those at the non-preferred site are inaccessible.

If the preferred site encounters a fault, the non-preferred site is not accessible to hosts.

- Arbitration server mode: In this mode, an independent physical or virtual machine is used as the arbitration device, which determines the type of failure, and uses the information to choose one side of the device pair to remain R/W accessible to the host. The Arbitration server mode is the default option.

Note: The following applies to the arbitration server:

- The operating system must be listed in the support matrix for Huawei storage. Visit http://support-open.huawei.com/en/ for more details.

- The arbitration server can be built on a physical or virtual machine.
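The two arbitration modes can be summarized as a small decision table. The sketch below is illustrative only. The static priority outcomes follow the description above; the arbitration server outcomes are an assumption inferred from the tested scenarios in this article (the surviving site stays accessible, and the preferred site wins when only the inter-array link fails). All names are hypothetical.

```python
from enum import Enum


class Fault(Enum):
    REPLICATION_LINK = "link between the storage arrays"
    PREFERRED_SITE = "preferred site"
    NON_PREFERRED_SITE = "non-preferred site"


def accessible_static_priority(fault):
    """Sites whose LUNs stay host-accessible in static priority mode."""
    if fault in (Fault.REPLICATION_LINK, Fault.NON_PREFERRED_SITE):
        return {"preferred"}
    # Preferred site fault: the non-preferred site is not accessible.
    return set()


def accessible_arbitration_server(fault):
    """ASSUMPTION: the surviving site wins; the preferred site wins a link fault."""
    if fault is Fault.PREFERRED_SITE:
        return {"non-preferred"}
    return {"preferred"}


for fault in Fault:
    print(
        fault.value,
        "| static priority:", sorted(accessible_static_priority(fault)),
        "| arbitration server:", sorted(accessible_arbitration_server(fault)),
    )
```

The only row where the two modes differ is a fault at the preferred site, which is why static priority mode is mainly suited to deployments without a third-party arbitration server.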

Recommendations and Limitations

It is recommended that each LUN use the same Host LUN ID on both storage systems.
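One way to check this recommendation is to compare the Host LUN IDs recorded for each array. The sketch below assumes you have already collected, by whatever means, a mapping of LUN WWN to Host LUN ID for each storage system; the function name, the WWN strings, and the ID values are hypothetical and do not come from any Huawei tool.

```python
def mismatched_host_lun_ids(array_a, array_b):
    """Return {wwn: (id_on_a, id_on_b)} for LUNs whose Host LUN IDs differ.

    A LUN mapped on only one array is reported with None for the other side.
    """
    mismatches = {}
    for wwn in sorted(set(array_a) | set(array_b)):
        id_a, id_b = array_a.get(wwn), array_b.get(wwn)
        if id_a != id_b:
            mismatches[wwn] = (id_a, id_b)
    return mismatches


# Hypothetical inventory: WWN -> Host LUN ID, one dict per storage system.
site_a = {"wwn-example-0001": 1, "wwn-example-0002": 2}
site_b = {"wwn-example-0001": 1, "wwn-example-0002": 5}

print(mismatched_host_lun_ids(site_a, site_b))
```

An empty result means every paired LUN uses the same Host LUN ID on both arrays.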

It is recommended to map the SAN_02 device to the ESXi host only after the HyperMetro pair has been configured successfully. You can run a rescan to detect the new paths; otherwise UltraPath or VMware NMP detects them automatically, although automatic detection might be delayed.
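As a rough sketch of the rescan step, the script below wraps two standard ESXi commands, `esxcli storage core adapter rescan --all` and `esxcli storage core path list`, and counts active paths per device. The parsing assumes the path list output contains `Device:` and `State:` fields for each path; verify this against the output on your own host. The sample text is abbreviated and illustrative, and the device name in it is made up.

```python
import subprocess
from collections import Counter

RESCAN_CMD = ["esxcli", "storage", "core", "adapter", "rescan", "--all"]
PATH_LIST_CMD = ["esxcli", "storage", "core", "path", "list"]


def count_active_paths(path_list_output):
    """Count paths in state 'active' per device from path list output."""
    counts = Counter()
    device = None
    for raw in path_list_output.splitlines():
        line = raw.strip()
        if line.startswith("Device:"):
            device = line.split(":", 1)[1].strip()
        elif line.startswith("State:") and device is not None:
            if line.split(":", 1)[1].strip() == "active":
                counts[device] += 1
            device = None
    return counts


def rescan_and_count():
    """Rescan all adapters, then count active paths (run on an ESXi host)."""
    subprocess.run(RESCAN_CMD, check=True)
    result = subprocess.run(
        PATH_LIST_CMD, check=True, capture_output=True, text=True
    )
    return count_active_paths(result.stdout)


# Abbreviated, illustrative sample; real output has more fields per path.
SAMPLE = """\
   Runtime Name: vmhba2:C0:T0:L1
   Device: naa.example0001
   State: active
   Runtime Name: vmhba2:C0:T1:L1
   Device: naa.example0001
   State: active
   Runtime Name: vmhba3:C0:T0:L1
   Device: naa.example0001
   State: dead
"""
print(dict(count_active_paths(SAMPLE)))
```

Comparing the count before and after mapping SAN_02 shows whether the additional paths to the second array have been detected.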

A certified configuration of OceanStor V5 is available and is listed in the Broadcom Compatibility Guide.

Tested Scenarios

The table below outlines the tested and supported failure scenarios, in a uniform host access topology, when using a Huawei OceanStor V5 Storage Cluster for VMware vSphere:

| Scenario | Operation | Observed VMware behavior |
| --- | --- | --- |
| Cross-data-center VM migration | Migrate a VM from site A to site B. | No impact. |
| Physical server breakdown | Unplug the power supply for a host in site A. | VMware High Availability fails over virtual machines to other available hosts. |
| Single-link failure of physical server | Unplug the physical link that connects a host in site A to an FC or IP switch. | No impact. |
| Storage failure in site A | Unplug the power supply for the storage system in site A. | No impact. |
| All-link failure of storage in site A | Unplug all service links that connect site A's storage array to an FC or IP switch. | No impact. |
| All-link failure of all hosts in site A | Unplug all physical links that connect all hosts in site A to an FC or IP switch. | VMware High Availability fails over virtual machines to available site B hosts. |
| Failure of storage replication links | Unplug replication links between sites. | No impact. |
| Failure of storage management network | Unplug network cable from network port of host in site A. | No impact. |
| All-link failure between sites | Disconnect the DWDM links between sites. | Virtual machines on site B hosts are automatically powered off and then powered on on available site A hosts. (Note: site A is the preferred site.) |
| Failure of site A | Power off all devices in site A. | VMware High Availability fails over virtual machines to available site B hosts. |
| Failure of site B | Power off all devices in site B. | VMware High Availability fails over virtual machines to available site A hosts. |
